Agentic AI Governance: It Fails at System Assumptions

Agentic AI governance for mission-critical software: a three-tier model, ADR boundaries, and why AI fails at system assumptions, not syntax.

9 min read

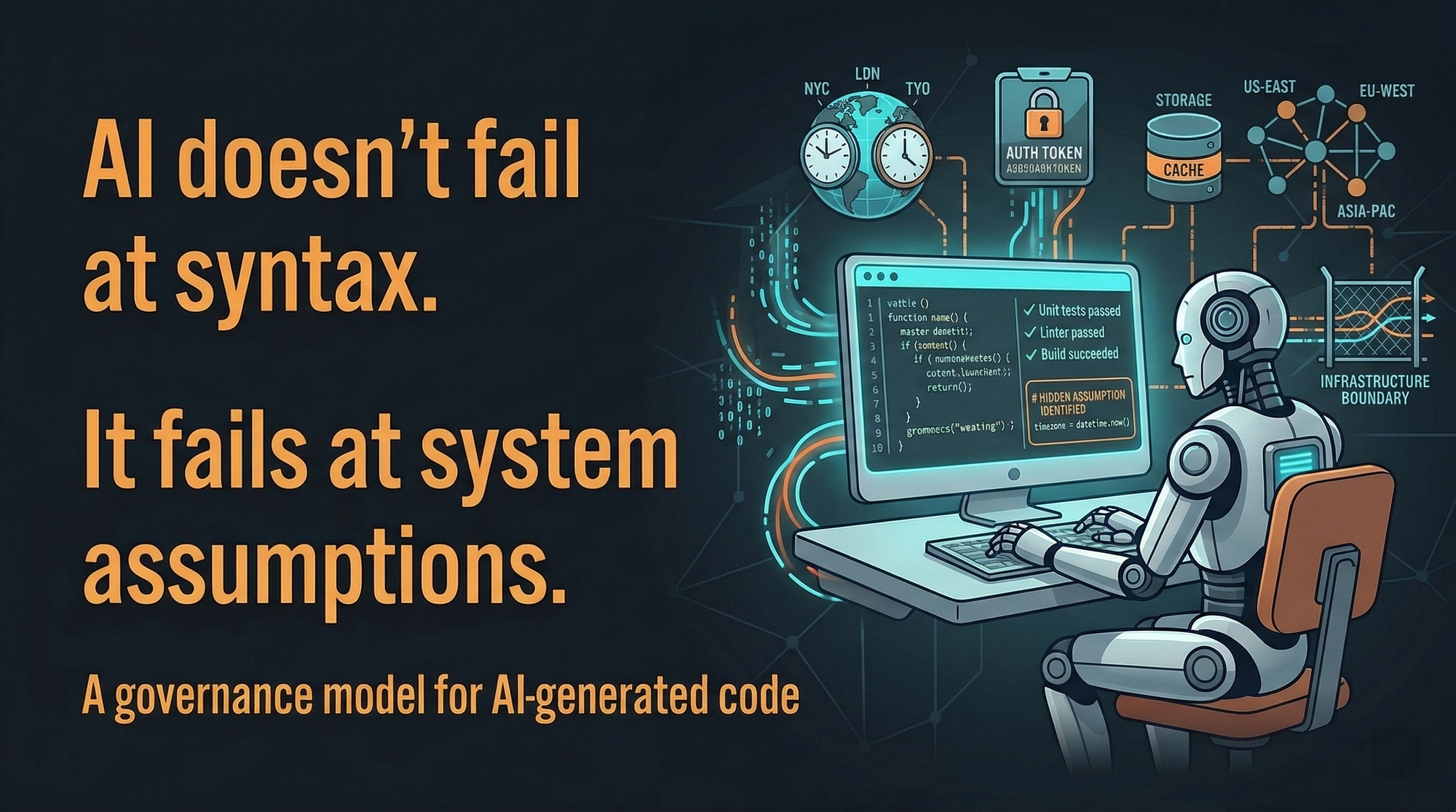

Agentic AI does not introduce new classes of bugs.

It amplifies assumptions that were never made explicit.

The better the model, the more convincing the output — and the harder the failure is to detect.

In production systems, these failures don’t look like hallucinations.

They pass review.

They pass tests.

They ship.

Then they surface at integration boundaries, under load, or during incidents — where blast radius is non-trivial.

The pattern isn’t prompt quality.

It’s contextual blindness at system boundaries.

The model solves the problem it was given.

It just doesn’t know the invariants it was never told.

This is the problem the Deterministic AI Factory is designed to solve.

The sandbox is real. Use it.

Before I describe what breaks, I want to be clear about what works.

Conversational AI is the most efficient R&D interface I’ve ever used. A recent example: I needed to figure out whether an agent could navigate Google Ads (explore campaign structures, take screenshots of ad previews) without triggering detection or getting flagged. We built it iteratively in chat: probe, adjust, probe again. We had a working solution in about an hour. Solo, that’s a day of trial and error, getting flagged, and starting over. Chat compresses discovery time without requiring architectural commitment up front.

In this mode, AI is a thought accelerator. I’m not shipping anything. I’m thinking. And for that, it’s exceptional.

The problem starts when thinking becomes doing, and no one draws the line. The same model, the same interface, the same workflow. But at some point, the output stops being a sketch and starts being something that runs in production, touches real data, or makes real decisions. And that shift changes everything about what a mistake costs.

There’s a threshold. It’s economic, not philosophical.

The liability doesn’t begin with a bad output. It begins at a specific threshold:

The cost of a hidden contextual error exceeds the time saved by generation.

That threshold moves depending on what the code touches. For most exploratory code, it never triggers. But once the generated output crosses into:

- persistent storage

- PII handling

- distributed system boundaries

- financial correctness

- concurrency assumptions

…ungoverned generation becomes risk amplification. You’re not saving time. You’re deferring the cost to a place where it’s much more expensive to pay.

The signal I use: Does this need end-to-end testing to know if it actually works? Unit tests aren’t enough. I need it running against real systems before I trust it. If the answer is yes, the governance scales up. That’s where the three tiers come from.

I use three tiers. Here’s the line between them.

Tier 1 is the sandbox. API exploration, pattern comparison, rapid prototyping. No ADR required. No compliance check. No persistence to production repos. The AI is a sandboxed collaborator, and I treat its output accordingly. If it’s wrong, I find out immediately, and it costs me nothing.

Tier 2 is internal tooling. CLI tools, observability utilities, and non-customer-facing automation. Before I write a line, I drop a markdown file: architecture, features, conventions, and unit tests are mandatory. It takes a minute. The AI follows it precisely, and the output quality jumps: not because the model got smarter, but because I gave it a boundary to work inside. Peer review before merge. If something breaks, it’s inconvenient. It’s not catastrophic.

Tier 3 is mission-critical. Transaction engines, audit logging, PII persistence, and regulatory workflows. If something breaks here, it’s not an inconvenience. It’s a systemic event. This tier gets mandatory ADRs, invariants defined before a single line of code is written, adversarial test suites, and developer sign-off.

| Tier | Context | ADR | Test Coverage | Human Gate |

|---|---|---|---|---|

| 1 | Discovery | None | None | None |

| 2 | Internal Tooling | Optional | Happy path | Peer review |

| 3 | Mission-Critical | Mandatory | Adversarial | Developer sign-off |

This isn’t bureaucracy. The more it can go wrong silently, the more structure it gets.

The ADR isn’t documentation. It’s the fence.

For Tier 3, I stopped treating Architecture Decision Records as just a record of past decisions. In this model, they serve two roles: they ground the AI in the constraints and reasoning that shaped the system so far, and they become the active boundary for every future AI iteration working inside it.

The ADR is the boundary of permissible logic. It defines what the AI is allowed to solve inside. It’s a fence, not a filing cabinet.

Here’s what that looks like in practice:

## ADR-007: PII Audit Logging

### Invariants

- [INV-1] No raw PII in logs. Masking occurs at the Service boundary via AuditPolicy.Redact().

- [INV-2] All timestamps use NodaTime (ISO-8601). No DateTime.UtcNow.

- [INV-3] Storage is immutable (WORM). No UPDATE or DELETE.The AI doesn’t propose architecture here. It solves inside these invariants. That distinction matters: the AI drafts the boundary, the developer owns it, and AI operates within it.

Once the invariants exist, we write tests against them before any implementation. Once the developer approves those tests, the AI isn’t allowed to touch them. Fix the code, or fail.

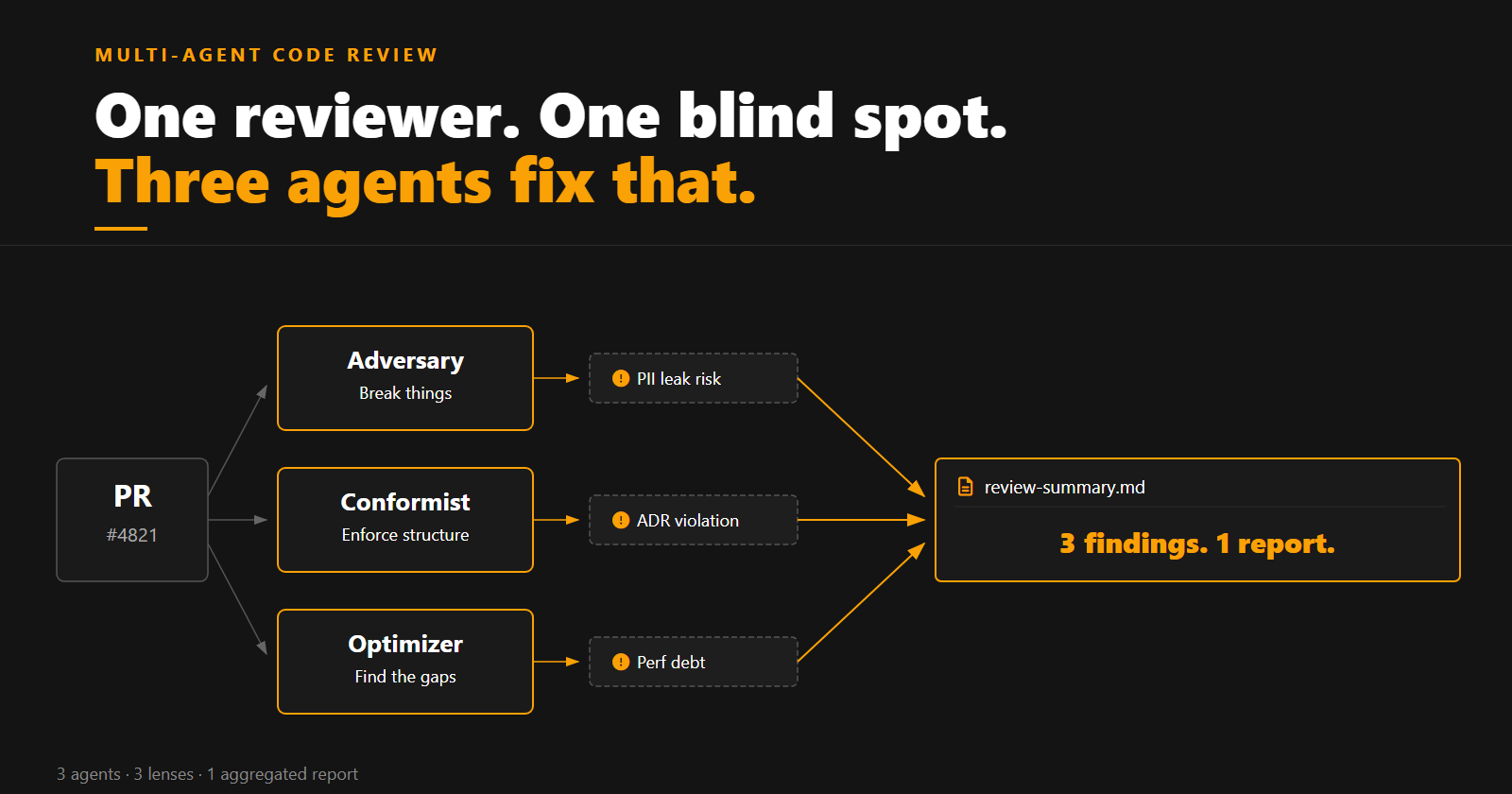

Three agents. Three lenses. One aggregated report.

The problem with a single AI reviewer is the same as the problem with self-review: it looks through one lens. In practice I run three agents in parallel, each with a distinct objective and a dedicated skill set. Not because they fight; they look at different things, and what one misses another finds.

In Cursor, I open a fresh context for each role and run them with dedicated skills. It’s not a fully automated pipeline. The value is in the separation of concerns, not the orchestration. Three agents, three lenses:

The Adversary searches for PII leaks, violates invariants, stresses concurrency edges. It probes the things generic scanners miss: a Redis Lua script that assumes atomic execution but doesn’t handle partial failure, or an event bus listener that forgets to checkpoint entirely. Its job is to break things in ways that look correct until they don’t.

The Conformist enforces DDD patterns, validates ADR compliance, checks naming conventions and architectural boundaries. Its job is to enforce structure.

The Optimizer ignores correctness entirely. It looks for performance debt, allocation pressure, and architectural inflection points. Its job is to find what the other two are too focused to see.

They don’t share objectives. Each outputs its findings in a structured format. I ask them to produce JSON so the results compose into a single review-summary.md alongside the feature. In a more automated setup, an orchestrator agent handles this: it spawns each reviewer, collects the outputs, and merges the report. That orchestrator is also what makes the pattern team-scalable: one trigger, consistent process, same output shape every time. Here’s what a finding looks like:

// security-review.json

{

"reviewer": "security",

"verdict": "BLOCK",

"finding": "PII Leakage: Silent Invariant Violation",

"location": "AuditService.cs:42",

"adr_violation": "ADR-007 [INV-1]",

"evidence": "Redact() invoked post-serialization; raw email embedded before scope",

"recommendation": "Invoke Redact() at the Service boundary prior to serialization."

}Three lenses, one aggregated report. When they surface the same issue independently, that’s the signal worth acting on.

What the agents can’t catch

Here’s the part that keeps me honest about what automation can and can’t do.

I was reviewing a LangGraph workflow built with AI assistance. The human-in-the-loop logic looked correct. The unit tests passed. The code review looked clean.

What I found was this:

# AI generated this; logic looks right, tests pass

if needs_human_input:

state["waiting"] = True

return stateThe problem: in LangGraph, stopping execution isn’t just a control-flow decision. interrupt() is a framework primitive that serializes the graph state to the checkpointer and marks the thread as suspended. Without it, when the workflow resumes, the graph re-executes from the start. Prior state is gone. The human-in-the-loop flow collapses silently: no error, no warning, just wrong behavior at the integration layer.

The fix is one line:

interrupt("Waiting for human approval")But the AI didn’t generate it because it solved the problem it was given: pause execution here. It didn’t know that “pause execution” and “pause execution in a way the framework can recover from” are two different problems.

I caught it in review. But I caught it because I knew the framework well enough to ask the right question. A reviewer who didn’t know LangGraph’s checkpointer semantics would have approved it.

That’s contextual blindness. And it points to something important: integration testing is a required gate, not optional. Unit tests validated the local behavior. Only an integration test with a persistent checkpointer would have caught the state loss. In Tier 3 workflows, integration tests belong in the constraint architecture alongside the ADR and the adversarial unit tests.

The developer's role in this model isn’t reading every line. It’s detecting invisible assumptions: the class of problem the AI made, not just this instance of it. That doesn’t scale through automation. It scales through experience.

What breaks this (and why I still use it)

No framework survives contact with reality unchanged.

Over-constraint is a real failure mode. If the invariants are too rigid, no valid implementation path exists. The system stalls. Constraint design has to be iterative: you write invariants, run the system, find where it stalls, and loosen what’s too tight.

Incompatible outputs happen when the security review recommends hardening and the performance review recommends refactoring in ways that pull against each other. The agents aren’t in conflict; they just found real tension in the design. My resolution framework: ADR-first, risk-weighted, with reversibility as the tiebreaker. If the decision can’t be cheaply reversed, the conservative path wins.

The upfront tax is real. This model requires more front-loaded structure before a single line of implementation is written. That’s intentional. The cost moves left. The blast radius shrinks. The alternative is paying a much larger cost later, in production, under pressure.

The implementation lifecycle for a Tier 3 feature looks like this:

- AI ingests feature spec and existing architecture constraints (including Mermaid architecture docs)

- AI drafts ADR with invariants and updates architecture documentation

- Developer approves ADR

- AI generates adversarial tests

- Developer approves tests

- Tests are checksum-locked

- AI generates implementation

- Agents review independently

- Integration tests run against a real environment

- Aggregated agent report produced

- Developer reviews flagged assumption edges only

That last point matters. The developer isn’t reviewing everything. The system surfaces what needs human judgment: the places where the AI made an assumption the framework can’t detect.

Trust is constructed. Not granted.

Generative AI increases solution velocity. That’s not in question.

It also increases the probability of what I’d call contextually correct but systemically wrong implementations. Code that solves the stated problem. Code that passes every check. Code that fails at the boundary between what was specified and what the system actually needed.

The missing layer isn’t better prompting. Better prompts don’t add integration tests. Better prompts don’t define invariants. Better prompts don’t catch interrupt() vs a manual state flag.

The missing layer is deterministic constraint architecture — defining the semantic perimeter within which AI is allowed to operate.

One more thing worth naming: the value of making this workflow deterministic and shareable. Our guardrails don’t live in individual engineers’ heads. They don’t disappear when someone leaves. New people can follow the same process without starting from zero. That’s not a minor operational detail. It’s the difference between a practice and a dependency on specific people.

In mission-critical systems, trust isn’t something you grant to a model. It’s something you construct around it.

Deterministically.

I write about engineering systems and AI in production. Follow along if this resonates.